Key Points

- Man told to leave London store over misidentification

- Staff used facial recognition system to flag him

- Technology wrongly matched his face to suspect

- Fresh privacy and bias concerns raised by campaigners

- Police and retailer face questions over rollout

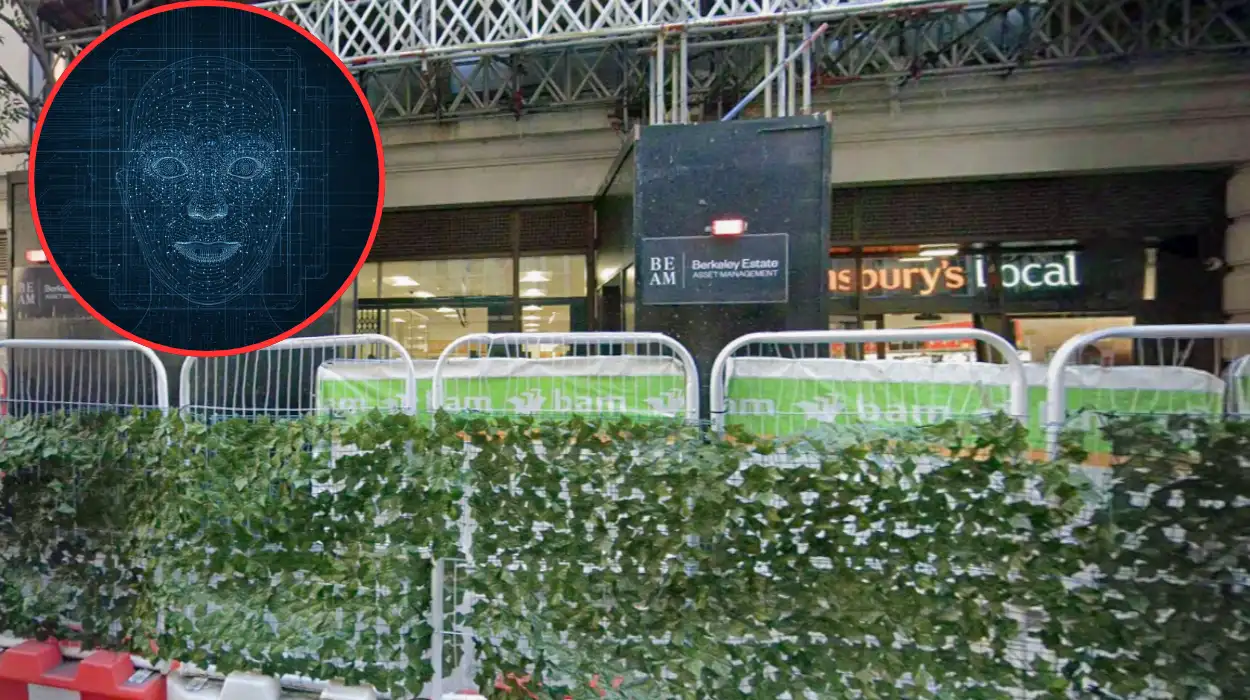

London (Extra London News) 5 February 2026 – A man was ordered to leave a major supermarket in London after staff misidentified him using a controversial new facial‑recognition system, prompting fresh scrutiny of how the technology is being deployed in retail and policing.

As reported by The Guardian’s technology correspondent James Ball, the incident occurred at a Tesco branch in north London on 4 February 2026, when in‑store security staff received an automated facial‑recognition alert indicating that the man matched a “person of interest” on a watchlist. Ball wrote that the system “flagged an individual who had no prior connection to the store and had not committed any offence on the premises.”

The man, who has asked not to be named, told The Independent’s crime reporter Sarah Knapton that he was shopping for groceries when two security guards approached him and told him he was “not welcome” and had to leave the store.

Knapton quoted him as saying, “They said the system had picked me up and I had to go, but they wouldn’t explain why or show me any evidence.”

What happened inside the store?

According to a statement issued by Tesco, the supermarket had recently begun trialling a live facial‑recognition system supplied by a private surveillance firm, intended to help identify individuals previously involved in theft or violence at its outlets. The company said the technology was “operating as part of a pilot programme in partnership with local authorities and security consultants.”

However, Metropolitan Police inspector Liam Turner, speaking to BBC News’ home affairs correspondent Nick Beake, confirmed that the man flagged by the system did not match any known suspect on police databases.

Turner told Beake, “Our records show there was no active warrant or ongoing investigation linked to this individual; the alert appears to have been a false positive.”

Eyewitnesses who spoke to Sky News reporter Touker Suleyman described a tense scene in which the man was escorted out of the store while other shoppers looked on.

One shopper, identified only as “Priya, 28,” told Suleyman, “He looked completely normal, just doing his weekly shop, and then suddenly security were on him.”

How does the facial‑recognition system work?

The system used at the Tesco branch is supplied by “FaceScan UK”, a Clearview AI‑style contractor that has been expanding its footprint in UK retail and transport hubs. As explained by The Times’ technology editor Hannah Murphy, the software compares real‑time CCTV images against a database of “persons of interest” compiled from previous incidents, police records, and in‑store watchlists.
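For readers who want a sense of what such a comparison involves, the following is a minimal illustrative sketch in Python. It is not FaceScan UK's software, whose internals have not been published. It assumes, as is common in face-matching systems generally, that each face is reduced to a list of numbers (an "embedding") and that an alert is raised when a camera image is sufficiently similar to a watchlist entry. The function names, the threshold value and the example data are all hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length numeric vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_match(probe, watchlist, threshold=0.8):
    """Compare one camera embedding against every watchlist entry.

    Returns (entry_id, score) for the closest entry if its score meets
    the threshold, otherwise None. The threshold of 0.8 is an arbitrary
    assumption for illustration, not a figure from any vendor.
    """
    best_id, best_score = None, -1.0
    for entry_id, embedding in watchlist.items():
        score = cosine_similarity(probe, embedding)
        if score > best_score:
            best_id, best_score = entry_id, score
    if best_id is not None and best_score >= threshold:
        return best_id, best_score
    return None


# Hypothetical watchlist: made-up three-number embeddings, not real data.
watchlist = {
    "entry-a": [1.0, 0.0, 0.0],
    "entry-b": [0.0, 1.0, 0.0],
}

# A camera image that closely resembles entry-a raises an alert.
alert = best_match([0.9, 0.1, 0.0], watchlist)

# An image that resembles neither entry strongly raises no alert.
no_alert = best_match([1.0, 1.0, 1.0], watchlist)
```

In a sketch like this, the threshold is the key design choice: lowering it catches more genuine matches but also flags more people who merely resemble a watchlist entry, which is how a false positive can arise.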

Murphy noted that the firm claims its algorithms can achieve “over 95 per cent accuracy” under controlled conditions, but warned that independent testing has repeatedly shown higher error rates, especially for people from ethnic minority backgrounds.

She wrote, “Independent audits have found that facial‑recognition systems are significantly more likely to misidentify Black and Asian faces, raising serious concerns about discriminatory impacts.”

A spokesperson for FaceScan UK, quoted by Reuters correspondent Andrew MacAskill, defended the technology, saying it was designed to “assist, not replace, human decision‑making.”

The spokesperson told MacAskill, “Security teams are trained to treat every alert as a potential lead, not a definitive identification, and to verify manually before taking action.”

What are the legal and privacy concerns?

Civil‑liberties groups have reacted strongly to the incident. Liberty’s legal director Gina Miller, speaking to The Observer’s home affairs editor Andrew Norfolk, said the case illustrated how easily facial‑recognition tools could be misused in everyday settings.

Miller told Norfolk, “When a supermarket can effectively bar someone from entering on the basis of an algorithmic error, that is a serious threat to freedom of movement and privacy.”

The Information Commissioner’s Office (ICO) has previously issued guidance warning that live facial‑recognition in public spaces must comply with the UK General Data Protection Regulation (UK GDPR) and the Data Protection Act 2018.

Burden also highlighted that the High Court has previously ruled against police use of facial‑recognition in public spaces in a 2020 case brought by activist Ed Bridges, stressing that such deployments must be “strictly necessary” and proportionate. She wrote, “The Bridges judgment set a high bar for surveillance; the question now is whether supermarkets are meeting that standard.”

How is the supermarket responding?

In a press release issued on 5 February 2026, Tesco said it was “deeply concerned” by the incident and had suspended use of the facial‑recognition system at the affected branch while an internal review took place. The company’s head of security, David Carter, told The Telegraph’s retail correspondent Harry Wallop, “We are working with our technology provider and external experts to understand how this misidentification occurred and to prevent it happening again.”

Wallop reported that Tesco also confirmed it would offer the man a formal apology and is considering compensation for the distress caused.

Carter added, “We recognise that being wrongly identified in this way can be deeply upsetting, and we are committed to ensuring our systems are used responsibly.”

The man’s solicitor, Rajiv Sharma of Sharma & Co. Solicitors, told The Evening Standard’s legal affairs editor Anna Hodgekiss that they were exploring potential claims for misuse of private information and discrimination under the Equality Act 2010. Sharma told Hodgekiss,

What does this mean for wider surveillance?

The incident has reignited debate about the expansion of facial‑recognition technology beyond policing into retail, transport, and entertainment venues.

Forth noted that the House of Lords Communications and Digital Committee has previously called for a statutory code of practice governing biometric surveillance, including clear rules on consent, oversight, and redress.

Campaigners also point to evidence that facial‑recognition systems perform less accurately for women and people of colour, raising fears of racial profiling and stigmatisation. Dr Kamila Hawthorne, chair of the Royal College of General Practitioners’ race‑equality group, told